Matrix determinant lemma

In mathematics, in particular linear algebra, the matrix determinant lemma[1][2] computes the determinant of the sum of an invertible matrix A and the dyadic product, $uv^T$, of a column vector u and a row vector $v^T$.


Statement

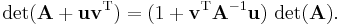

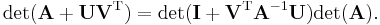

Suppose A is an invertible square matrix and u, v are column vectors. Then the matrix determinant lemma states that

$$\det(A + uv^T) = (1 + v^T A^{-1} u)\,\det(A).$$

Here, $uv^T$ is the dyadic (outer) product of the two vectors u and v.
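The identity is easy to check numerically. The following is a minimal Python sketch, not taken from the cited sources: it uses exact `fractions.Fraction` arithmetic so that both sides can be compared for exact equality, and the helper names `det`, `solve`, `lemma_lhs` and `lemma_rhs`, as well as the example matrix and vectors, are hypothetical choices made only for illustration. The vector $A^{-1}u$ is obtained by solving $Ax = u$ rather than by forming the inverse.

```python
from fractions import Fraction


def det(M):
    """Determinant by Gaussian elimination with exact rational arithmetic."""
    a = [[Fraction(x) for x in row] for row in M]
    n = len(a)
    result = Fraction(1)
    for col in range(n):
        # Find a row at or below `col` with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if a[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)  # no pivot: the matrix is singular
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            result = -result  # a row swap flips the sign
        result *= a[col][col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return result


def solve(M, b):
    """Solve M x = b by Gauss-Jordan elimination; assumes M is invertible."""
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(bi)] for row, bi in zip(M, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [x / p for x in aug[col]]  # scale the pivot row to get a 1
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[col])]
    return [row[n] for row in aug]


def lemma_lhs(A, u, v):
    """det(A + u v^T), computed directly from the updated matrix."""
    n = len(A)
    return det([[A[i][j] + u[i] * v[j] for j in range(n)] for i in range(n)])


def lemma_rhs(A, u, v):
    """(1 + v^T A^{-1} u) * det(A), with A^{-1} u found by solving A x = u."""
    x = solve(A, u)
    return (1 + sum(vi * xi for vi, xi in zip(v, x))) * det(A)


A = [[2, 1, 0], [1, 3, 1], [0, 1, 4]]
u = [1, 0, 2]
v = [0, 1, 1]
print(lemma_lhs(A, u, v), lemma_rhs(A, u, v))  # prints: 21 21
```

Here det(A) = 18 and $v^T A^{-1} u = 1/6$, so the right-hand side is $(7/6) \cdot 18 = 21$, matching the determinant of the updated matrix.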

Proof

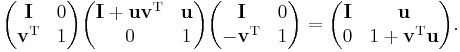

First, the special case A = I follows from the equality:[3]

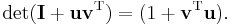
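Multiplying out the left-hand side one pair of factors at a time shows why this block-matrix equality holds. Here I is an identity matrix of the same size as $uv^T$, and $v^T u$ is a scalar, so $v^T u v^T = (v^T u)\,v^T$:

$$\begin{pmatrix} I & 0 \\ v^T & 1 \end{pmatrix} \begin{pmatrix} I + uv^T & u \\ 0 & 1 \end{pmatrix} = \begin{pmatrix} I + uv^T & u \\ v^T + (v^T u)\,v^T & 1 + v^T u \end{pmatrix},$$

$$\begin{pmatrix} I + uv^T & u \\ v^T + (v^T u)\,v^T & 1 + v^T u \end{pmatrix} \begin{pmatrix} I & 0 \\ -v^T & 1 \end{pmatrix} = \begin{pmatrix} I + uv^T - uv^T & u \\ v^T + (v^T u)\,v^T - (1 + v^T u)\,v^T & 1 + v^T u \end{pmatrix} = \begin{pmatrix} I & u \\ 0 & 1 + v^T u \end{pmatrix}.$$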

The determinant of the left-hand side is the product of the determinants of the three matrices. Since the first and third matrices are triangular with unit diagonal, their determinants are just 1. The middle matrix is block upper triangular, so its determinant is $\det(I + uv^T) \cdot 1$, which is our desired value. The right-hand side is also block upper triangular, so its determinant is simply $1 + v^T u$. So we have the result:

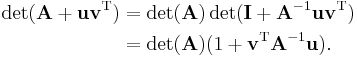

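Then the general case follows by factoring out A, since $A + uv^T = A\,(I + (A^{-1}u)\,v^T)$:

$$\det(A + uv^T) = \det(A)\,\det\big(I + (A^{-1}u)\,v^T\big) = \det(A)\,\big(1 + v^T (A^{-1}u)\big).$$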

Application

If the determinant and inverse of A are already known, the formula provides a numerically cheap way to compute the determinant of A corrected by the matrix $uv^T$. The computation is cheap because the determinant of $A + uv^T$ does not have to be computed from scratch, which for a general n-by-n matrix costs $O(n^3)$ operations (for example via LU decomposition); evaluating $v^T A^{-1} u$ with a known $A^{-1}$ takes only $O(n^2)$ operations. Using unit vectors for u and/or v, individual columns, rows or elements[4] of A may be manipulated and a correspondingly updated determinant computed relatively cheaply in this way.
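A minimal sketch of the single-element update, in Python with exact fractions. To add δ to the entry in row i, column j, take $u = \delta e_i$ and $v = e_j$ (unit vectors), so $v^T A^{-1} u = \delta\,(A^{-1})_{ji}$ and the new determinant is $\det(A)\,(1 + \delta\,(A^{-1})_{ji})$. The helpers `inverse_and_det` and `updated_det`, and the example matrix, are hypothetical and written only for this illustration; indices in the code are 0-based, and the direct recomputation at the end is only a check.

```python
from fractions import Fraction


def inverse_and_det(M):
    """Gauss-Jordan elimination with exact Fractions.

    Returns (M^{-1}, det M), or (None, 0) if M is singular.
    """
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    d = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            return None, Fraction(0)
        if pivot != col:
            aug[col], aug[pivot] = aug[pivot], aug[col]
            d = -d  # a row swap flips the sign of the determinant
        p = aug[col][col]
        d *= p  # det M is the signed product of the pivots
        aug[col] = [x / p for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug], d


def updated_det(det_A, A_inv, u, v):
    """det(A + u v^T) from det(A) and A^{-1}: O(n^2) work instead of O(n^3)."""
    n = len(A_inv)
    A_inv_u = [sum(A_inv[i][k] * u[k] for k in range(n)) for i in range(n)]
    return det_A * (1 + sum(v[i] * A_inv_u[i] for i in range(n)))


A = [[2, 1, 0], [1, 3, 1], [0, 1, 4]]
A_inv, det_A = inverse_and_det(A)  # the one-off O(n^3) step

# Add delta = 5 to entry (0, 2): u = 5 * e_0, v = e_2.
i, j, delta = 0, 2, 5
u = [delta if k == i else 0 for k in range(3)]
v = [1 if k == j else 0 for k in range(3)]
new_det = updated_det(det_A, A_inv, u, v)
# Same value via the single-entry shortcut det(A) * (1 + delta * (A^{-1})_{ji}).
shortcut = det_A * (1 + delta * A_inv[j][i])

A_mod = [row[:] for row in A]
A_mod[i][j] += delta
print(new_det, shortcut, inverse_and_det(A_mod)[1])  # prints: 23 23 23
```

The general `updated_det` covers row and column changes as well (one of u, v a unit vector, the other arbitrary); the single-entry case reduces further to one lookup in $A^{-1}$.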

When the matrix determinant lemma is used in conjunction with the Sherman-Morrison formula, both the inverse and determinant may be conveniently updated together.
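A sketch of such a combined update, in Python with exact fractions. It relies on the standard Sherman-Morrison identity $(A + uv^T)^{-1} = A^{-1} - \frac{A^{-1}u\,v^T A^{-1}}{1 + v^T A^{-1} u}$, whose denominator is exactly the factor by which the determinant lemma multiplies $\det(A)$, so both updates share one scalar. The function name `rank_one_update` and the example data are hypothetical; `inverse_and_det` is a plain Gauss-Jordan helper included only so that the example runs on its own.

```python
from fractions import Fraction


def inverse_and_det(M):
    """Gauss-Jordan elimination with exact Fractions.

    Returns (M^{-1}, det M), or (None, 0) if M is singular.
    """
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    d = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            return None, Fraction(0)
        if pivot != col:
            aug[col], aug[pivot] = aug[pivot], aug[col]
            d = -d  # a row swap flips the sign of the determinant
        p = aug[col][col]
        d *= p  # det M is the signed product of the pivots
        aug[col] = [x / p for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug], d


def rank_one_update(A_inv, det_A, u, v):
    """Return ((A + u v^T)^{-1}, det(A + u v^T)) in O(n^2) operations."""
    n = len(A_inv)
    A_inv_u = [sum(A_inv[i][k] * u[k] for k in range(n)) for i in range(n)]  # A^{-1} u
    vT_A_inv = [sum(v[k] * A_inv[k][j] for k in range(n)) for j in range(n)]  # v^T A^{-1}
    factor = 1 + sum(v[i] * A_inv_u[i] for i in range(n))  # 1 + v^T A^{-1} u
    if factor == 0:
        raise ValueError("A + u v^T is singular")
    new_inv = [[A_inv[i][j] - A_inv_u[i] * vT_A_inv[j] / factor for j in range(n)]
               for i in range(n)]
    return new_inv, det_A * factor


A = [[2, 1, 0], [1, 3, 1], [0, 1, 4]]
u = [1, 0, 2]
v = [0, 1, 1]
A_inv, det_A = inverse_and_det(A)
B_inv, det_B = rank_one_update(A_inv, det_A, u, v)

# Check against B = A + u v^T inverted from scratch.
B = [[A[i][j] + u[i] * v[j] for j in range(3)] for i in range(3)]
B_inv_direct, det_B_direct = inverse_and_det(B)
print(det_B, det_B_direct, B_inv == B_inv_direct)  # prints: 21 21 True
```

A zero factor signals that the updated matrix is singular, in which case neither the inverse nor a nonzero determinant exists; the sketch raises an error rather than dividing by zero.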

Generalization

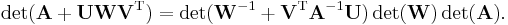

Suppose A is an invertible n-by-n matrix and U, V are n-by-m matrices. Then

$$\det(A + UV^T) = \det(I_m + V^T A^{-1} U)\,\det(A).$$

In the special case $A = I_n$ this is Sylvester's theorem for determinants:

$$\det(I_n + UV^T) = \det(I_m + V^T U).$$
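A quick exact-arithmetic check of the n-by-m form $\det(A + UV^T) = \det(I_m + V^T A^{-1} U)\,\det(A)$, sketched in Python with n = 3 and m = 2. The matrices U and V, and the helpers `matmul`, `transpose`, `identity`, `add` and `both_sides`, are hypothetical choices for illustration; `inverse_and_det` is a plain Gauss-Jordan helper, not a library routine. Passing the identity for A exercises the special case $A = I_n$.

```python
from fractions import Fraction


def inverse_and_det(M):
    """Gauss-Jordan elimination with exact Fractions.

    Returns (M^{-1}, det M), or (None, 0) if M is singular.
    """
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    d = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            return None, Fraction(0)
        if pivot != col:
            aug[col], aug[pivot] = aug[pivot], aug[col]
            d = -d  # a row swap flips the sign of the determinant
        p = aug[col][col]
        d *= p  # det M is the signed product of the pivots
        aug[col] = [x / p for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug], d


def matmul(X, Y):
    """Product of an a-by-b matrix X and a b-by-c matrix Y."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def transpose(X):
    return [list(col) for col in zip(*X)]


def identity(n):
    return [[int(i == j) for j in range(n)] for i in range(n)]


def add(X, Y):
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def both_sides(A, U, V):
    """Return (det(A + U V^T), det(I_m + V^T A^{-1} U) * det(A))."""
    m = len(U[0])
    A_inv, det_A = inverse_and_det(A)
    lhs = inverse_and_det(add(A, matmul(U, transpose(V))))[1]  # n-by-n determinant
    small = add(identity(m), matmul(transpose(V), matmul(A_inv, U)))  # m-by-m matrix
    return lhs, inverse_and_det(small)[1] * det_A


A = [[2, 1, 0], [1, 3, 1], [0, 1, 4]]
U = [[1, 0], [0, 1], [2, 1]]
V = [[0, 1], [1, 0], [1, 1]]
lhs, rhs = both_sides(A, U, V)
print(lhs, rhs)  # prints: 9 9
```

The right-hand side needs only an m-by-m determinant once $A^{-1}$ is known, which is the point of the generalization when m is much smaller than n.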

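Given additionally an invertible m-by-m matrix W, the relationship can also be expressed as

$$\det(A + UWV^T) = \det(W^{-1} + V^T A^{-1} U)\,\det(W)\,\det(A).$$

This follows from the n-by-m form with $V^T$ replaced by $WV^T$, since $\det(I_m + WV^T A^{-1} U) = \det\big(W(W^{-1} + V^T A^{-1} U)\big) = \det(W)\,\det(W^{-1} + V^T A^{-1} U)$.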

See also

- The Sherman-Morrison formula, which shows how to update the inverse, $A^{-1}$, to obtain $(A + uv^T)^{-1}$.

- The Woodbury formula, which shows how to update the inverse, $A^{-1}$, to obtain $(A + UCV^T)^{-1}$.

References

- Harville, D. A. (1997). Matrix Algebra From a Statistician’s Perspective. Springer-Verlag.

- Brookes, M. (2005). "The Matrix Reference Manual (online)". http://www.ee.ic.ac.uk/hp/staff/dmb/matrix/intro.html.

- Ding, J.; Zhou, A. (2007). "Eigenvalues of rank-one updated matrices with some applications". Applied Mathematics Letters 20 (12): 1223–1226. doi:10.1016/j.aml.2006.11.016. ISSN 0893-9659. http://www.sciencedirect.com/science/article/B6TY9-4N3P02W-5/2/b7f582211325150af4c44674b5e06dd1.

- Press, W. H.; Flannery, B. P.; Teukolsky, S. A.; Vetterling, W. T. (1992). Numerical Recipes in C: The Art of Scientific Computing. Cambridge University Press. p. 73. ISBN 0-521-43108-5.